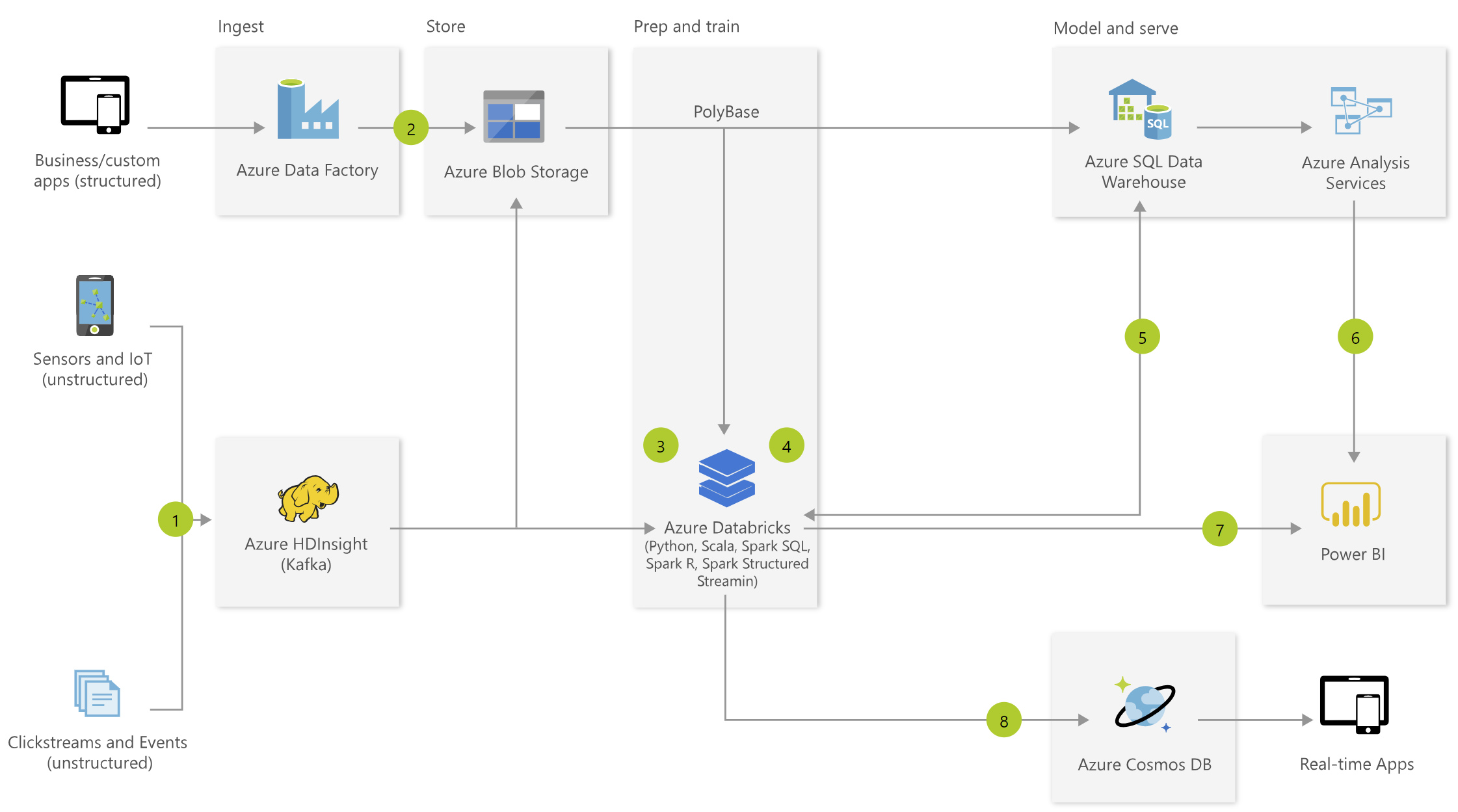

Microsoft Azure reference architectures, such as the diagram displayed below, can be very helpful when planning an implementation:

Although we have best practices and common practices, there is rarely just one right answer. Nearly every technology present on an architecture diagram has a valid alternative. It is great to have a lot of “tools in the toolbox” though the wide number of choices can lead to some uncertainty. Following are a few high-level considerations for when you are evaluating which services to use in Azure.

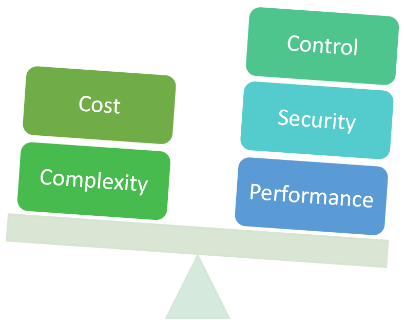

1: Be aware of your goals for going to the cloud, and the tradeoffs involved

When deciding on architecture components, you will constantly face tradeoffs and decisions around aspects such as cost, control, complexity, performance, and security. The choices you face during decision-making and implementation become easier if you have a firm handle on your objectives for cloud solutions.

Considerations:

- Are you willing to incur additional cost for increased performance?

- Are you willing to take on more complexity in exchange for an increased level of control?

- Are you willing to accept reduced agility for greater security?
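
One lightweight way to make these tradeoffs explicit is a weighted scoring matrix. The Python sketch below is an illustration under stated assumptions, not a prescribed method: the aspect weights, the 1 to 5 ratings, and the option names (`option_a`, `option_b`) are all hypothetical. It shows how the same two candidate options can rank differently depending on which goals you weight most heavily.

```python
from typing import Dict

# Hypothetical 1-5 ratings per aspect (higher is better; for "complexity", higher means simpler).
OPTIONS: Dict[str, Dict[str, int]] = {
    "option_a": {"cost": 3, "control": 2, "complexity": 4, "performance": 3, "security": 4},
    "option_b": {"cost": 3, "control": 5, "complexity": 2, "performance": 4, "security": 3},
}

# Hypothetical goal profiles: integer weights that sum to 100.
SIMPLICITY_FIRST = {"cost": 30, "control": 10, "complexity": 30, "performance": 15, "security": 15}
CONTROL_FIRST = {"cost": 10, "control": 35, "complexity": 10, "performance": 30, "security": 15}


def weighted_score(ratings: Dict[str, int], weights: Dict[str, int]) -> int:
    """Combine per-aspect ratings into one score (at most 500 with 1-5 ratings)."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[aspect] * ratings[aspect] for aspect in weights)


def pick_best(options: Dict[str, Dict[str, int]], weights: Dict[str, int]) -> str:
    """Return the name of the option with the highest weighted score."""
    return max(options, key=lambda name: weighted_score(options[name], weights))


if __name__ == "__main__":
    for label, weights in (("simplicity first", SIMPLICITY_FIRST), ("control first", CONTROL_FIRST)):
        scores = {name: weighted_score(r, weights) for name, r in OPTIONS.items()}
        print(label, scores, "->", pick_best(OPTIONS, weights))
```

With these made-up numbers, `option_a` wins 335 to 305 under the simplicity-first profile, while `option_b` wins 390 to 290 under the control-first profile. The ratings themselves matter less than the exercise of writing your priorities down before comparing services.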

2: Realistically assess what your team can support

An architecture composed of numerous technologies can be challenging to support, especially for a small team. In the diagram above, there are eight distinct services (not including other aspects such as Azure Active Directory, virtual networks, and so forth). Each service has its own characteristics, languages, tools, and best practices. Team members also have preferences about which skillsets they can use (or want to learn), and those preferences can significantly influence which services are selected.

Considerations:

- Who will build and support the solution?

- Does your team have existing expertise, or will they be expected to learn new capabilities quickly?

- Do you have personnel available to handle activities such as capacity planning, performance tuning, and cost management?

3: Decide the extent to which your team follows best-fit engineering principles

The above diagram also shows Azure Databricks, a compute service targeted toward data engineering and data science workloads. Because Azure Databricks supports multiple languages (SQL, Python, R, Scala), it aims to satisfy multiple use cases and multiple user groups with one “unified” tool. That simplification is certainly an appealing proposition. The alternative is best-fit engineering: choosing the most suitable service for each individual task, which can improve each component but adds more services for the team to learn and support.

Considerations:

- Does your team value best-fit engineering over architectural simplicity?

- Is your team prepared to support numerous layers?

- Can you justify the use of every service selected in the architecture?

4: Decide your preferences for data integration vs. data virtualization techniques

Although we do not have a full-fledged data virtualization platform in the Microsoft ecosystem, we do have various data virtualization techniques available to us. For instance, PolyBase and Elastic Queries can both execute remote queries against another data store. We can also use DirectQuery or Live Connections in Power BI to avoid redundant copies of data and the duplicate refresh operations they require. As the number of data storage services in an end-to-end architecture increases, so do your opportunities to use data virtualization techniques.
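
To make the integration-versus-virtualization distinction concrete, here is a minimal Python sketch that uses SQLite's `ATTACH DATABASE` as a stand-in for a remote query. It is only an analogy: PolyBase, Elastic Queries, and DirectQuery work very differently, and the database files, table names, and the `compare_copy_vs_live` function are hypothetical. What it illustrates is the behavior: a copied table goes stale until it is refreshed, while a query against the source sees new data immediately.

```python
import os
import sqlite3
import tempfile
from contextlib import closing
from typing import Tuple


def compare_copy_vs_live() -> Tuple[float, float]:
    """Return (total from a copied table, total from a live query) after the source changes."""
    with tempfile.TemporaryDirectory() as tmp:
        source_path = os.path.join(tmp, "source.db")
        with closing(sqlite3.connect(source_path)) as source, \
                closing(sqlite3.connect(os.path.join(tmp, "analytics.db"))) as analytics:
            # Hypothetical "source system" holding sales rows.
            source.execute("CREATE TABLE sales (id INTEGER PRIMARY KEY, amount REAL)")
            source.executemany("INSERT INTO sales (amount) VALUES (?)", [(100.0,), (250.0,)])
            source.commit()

            # SQLite ATTACH stands in for a remote-query feature: the source becomes
            # queryable from the analytics database without copying its data.
            analytics.execute("ATTACH DATABASE ? AS src", (source_path,))

            # Integration: materialize a local copy, which is stale until refreshed.
            analytics.execute("CREATE TABLE sales_copy AS SELECT id, amount FROM src.sales")
            analytics.commit()

            # New data lands in the source after the copy was taken.
            source.execute("INSERT INTO sales (amount) VALUES (?)", (50.0,))
            source.commit()

            copied_total = analytics.execute("SELECT SUM(amount) FROM sales_copy").fetchone()[0]
            # Virtualization: query the source in place, so the new row is visible.
            live_total = analytics.execute("SELECT SUM(amount) FROM src.sales").fetchone()[0]
    return copied_total, live_total


if __name__ == "__main__":
    print(compare_copy_vs_live())
```

Calling `compare_copy_vs_live()` returns `(350.0, 400.0)`: the copied table misses the row added after the copy was made, while the live query includes it. The tradeoff in a real architecture is that every virtualized query now depends on the source system's availability and performance.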

Considerations:

- Do you have concerns about redundant copies of data?

- Do you need options for near-real-time data access?

- Are you required to meet data residency or compliance requirements?

5: Routinely conduct a technical proof of concept during the decision-making process

It is common for new features to be introduced as a minimum viable product (MVP). This approach allows vendors like Microsoft to obtain feedback from early adopters and prioritize incremental improvements. From the customer perspective, this is advantageous because we see new features and functionality sooner. However, it also means that features take time to mature, so key functionality may not be available initially. For this reason, it is wise to do a proof of concept to reduce risk to the project, particularly if you are planning to use an unfamiliar or relatively new service.

The fast pace at which Azure services evolve makes it very challenging to keep up with their current capabilities. All services improve incrementally over time; Power BI, for example, introduces new functionality every month. Some services also undergo larger, more fundamental changes that require a migration, such as Azure Data Factory V2 or Azure Data Lake Storage Gen2.

Considerations:

- Do your processes and team structure support starting small and iterating?

- How receptive is your team to learning new things on a regular basis?

- Is your team prepared to stay current and adapt to the pace of change?

At BlueGranite, we thoroughly enjoy helping customers make these types of decisions. Contact us today and we’ll be happy to assist in getting you through the process.